Understanding how prominent language models work means examining both their architecture and the methods used to train them. These models are typically very large and rely on deep neural networks with many layers to process and generate text. The architecture determines how information propagates through the network, which in turn affects the model's ability to comprehend input and produce coherent output. Training, meanwhile, involves presenting massive text datasets to the model so that it learns the patterns and associations within language.

- The choice of architecture and training method directly affects a model's performance on tasks such as text generation.

- Understanding these fundamentals is important both for developers seeking to improve existing models and for people who use these AI systems.

Major Models: Pushing the Boundaries of Language Understanding

Recent advances in artificial intelligence have produced language models that continue to push the boundaries of natural language understanding. Models such as GPT-3 can perform a wide range of tasks, including producing human-quality text, translating languages, summarizing information, and answering in-depth questions. Their potential is broad, with uses spanning diverse fields, from education to technology.

Scaling Laws for Major Models: Insights from Empirical Studies

Empirical studies have revealed scaling laws governing the performance of major language models. These laws describe a systematic relationship between model size, training data volume, and measured performance on a range of tasks. Notably, larger models tend to show consistent improvements in accuracy as their size grows, suggesting a strong correlation between model scale and representational power. The relationship between training data and performance follows a similar trend, with models trained on larger datasets generally achieving better results. These findings highlight the importance of both model size and data scale in driving model performance.
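To make the idea of a scaling relationship concrete, here is a minimal sketch that assumes performance follows a simple power law, loss ≈ a · size^(-b), and recovers the two constants by fitting a straight line in log-log space. The functional form, the function name `fit_power_law`, and every number below are illustrative assumptions rather than results from any particular study; the data points are synthetic.

```python
import math


def fit_power_law(sizes, losses):
    """Fit loss ~ a * size**(-b) by least squares on log(size) vs. log(loss).

    Hypothetical helper for illustration; returns the tuple (a, b).
    """
    xs = [math.log(s) for s in sizes]
    ys = [math.log(v) for v in losses]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Ordinary least-squares slope and intercept of the log-log line.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # A line y = intercept + slope * x in log space is a power law in linear space.
    return math.exp(intercept), -slope


# Synthetic data generated from an assumed power law (not real measurements).
true_a, true_b = 10.0, 0.08
sizes = [1e6, 1e7, 1e8, 1e9]  # assumed model sizes, in parameters
losses = [true_a * s ** (-true_b) for s in sizes]

a, b = fit_power_law(sizes, losses)

# Extrapolate the fitted curve to a model ten times larger than the largest one.
predicted = a * (1e10) ** (-b)
print(f"a={a:.3f}, b={b:.3f}, predicted loss at 1e10 parameters={predicted:.3f}")
```

Because the synthetic points lie exactly on a power law, the fit recovers the assumed constants. Real measurements are noisy and may deviate from a pure power law, so an extrapolation like `predicted` should be read as a rough guide, not a guarantee.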

However, scaling alone does not guarantee optimal performance. Architectural choices, training methodologies, and task-specific fine-tuning also play important roles in shaping the final outcome.

Future research directions include exploring the limits of scaling, investigating the interplay between model size, data scale, and architectural design, and developing more resource-aware training paradigms for large language models.

Ethical Considerations in Developing and Deploying Major Models

Developing and deploying major models raises many ethical considerations that demand careful attention. One key concern is bias, which can perpetuate existing societal inequities. Models trained on unrepresentative data may discriminate against certain groups, leading to unfair outcomes. It's crucial to combat bias by ensuring that training corpora are representative and inclusive.

Another important ethical consideration is transparency. The decision-making processes of major models can be opaque, making it challenging to understand how they arrive at their conclusions. Fostering transparency through explainable AI can improve trust and accountability.

Furthermore, the potential for misuse of major models is a serious concern. It's vital to establish robust safeguards to prevent these technologies from being used for detrimental purposes, such as creating deepfakes.

Major Models: Applications in Natural Language Processing

Major language models have transformed natural language processing (NLP), enabling a wide array of applications. These powerful systems, often trained on vast collections of text and code, demonstrate remarkable capabilities in understanding and generating human language. Prominent examples include BERT, which excels at language-understanding tasks such as text classification and question answering. These models have had a broad impact across many fields, including research. As NLP continues to evolve, major models are poised to change the way we interact with technology and information.

The Ascent of Large Models

The landscape of artificial intelligence is undergoing a profound shift. Major AI models, characterized by their immense scale, are pushing the boundaries in diverse domains. These cutting-edge systems are capable of performing complex tasks with striking precision. From natural language understanding to computer vision, major models are transforming industries and reshaping our world.

With ongoing advancements in AI research, experts predict a future brimming with groundbreaking innovations in the years to come.

Scott Baio Then & Now!

Scott Baio Then & Now! Brian Bonsall Then & Now!

Brian Bonsall Then & Now! Michael Oliver Then & Now!

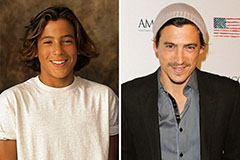

Michael Oliver Then & Now! Andrew Keegan Then & Now!

Andrew Keegan Then & Now! Nadia Bjorlin Then & Now!

Nadia Bjorlin Then & Now!